I am probably unqualified to speak about this, as I am using an RX 550 low profile and a 768P monitor and almost never play newer titles, but I want to kickstart a discussion, so hear me out.

The push for more realistic graphics has been going on for longer than most of us can remember, and it made sense for most of that time, as anyone who has looked at an older game can confirm - I am a person who has fun making fun of weird-looking 3D people.

But I feel games' graphics have reached the point of diminishing returns. AAA studios today spend millions of dollars just to match the graphics level of their previous titles - often sacrificing other, more important things along the way - and people are unnecessarily spending lots of money on electricity-consuming, heat-generating GPUs.

I understand getting an expensive GPU for high resolution, high refresh rate gaming, but for 1080P? You shouldn't need anything more powerful than a 1080 Ti for years. I think game studios should just slow down their graphical improvements, as they are unnecessary - in my opinion - and just prevent people with lower end systems from enjoying games. And who knows, maybe we will start seeing cheap 50 watt gaming GPUs become viable and capable of running games at medium/high settings - even iGPUs render good graphics now.

TLDR: why pay more and hurt the environment with higher power consumption when what we have is enough - and possibly overkill?

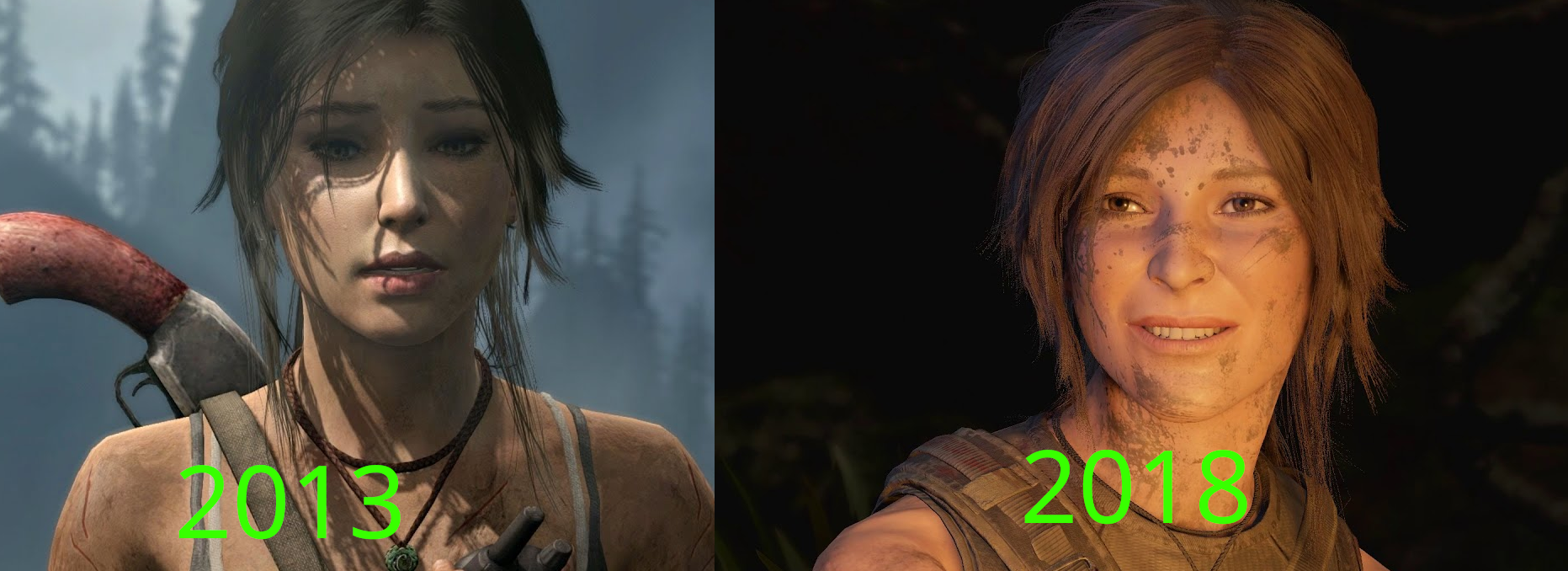

Note: it would be insane of me to claim that there is not a big difference between the two pictures - Tomb Raider 2013 vs Shadow of the Tomb Raider 2018 - but can you really call either of them bad, especially the right picture (5 years old)?

Note 2: this is not much more than a discussion starter that is unlikely to evolve into something larger.

I've noticed this a lot in comparisons claiming to show that graphics quality has regressed (either over time, or from an earlier demo reel of the same game), where the person trying to make the point cherry-picks drastically different lighting or atmospheric scenarios that put the later image in a bad light. Like, no crap Lara looks better in the 2013 image, she's lit from an angle that highlights her facial features and inexplicably wearing makeup while in the midst of a jungle adventure. The Shadow of the Tomb Raider image, by comparison, is of a dirty-faced Lara pulling a face while being lit from an unflattering angle by campfire. Compositionally, of course the first image is prettier -- but as you point out, the lack of effective subsurface scattering in the Tomb Raider 2013 skin shader is painfully apparent versus SofTR. The newer image is more realistic, even if it's not as flattering.