this post was submitted on 08 Jul 2024


Science Memes


Welcome to c/science_memes @ Mander.xyz!

A place for majestic STEMLORD peacocking, as well as memes about the realities of working in a lab.

Rules

- Don't throw mud. Behave like an intellectual and remember the human.

- Keep it rooted (on topic).

- No spam.

- Infographics welcome, get schooled.

This is a science community. We use the Dawkins definition of meme.



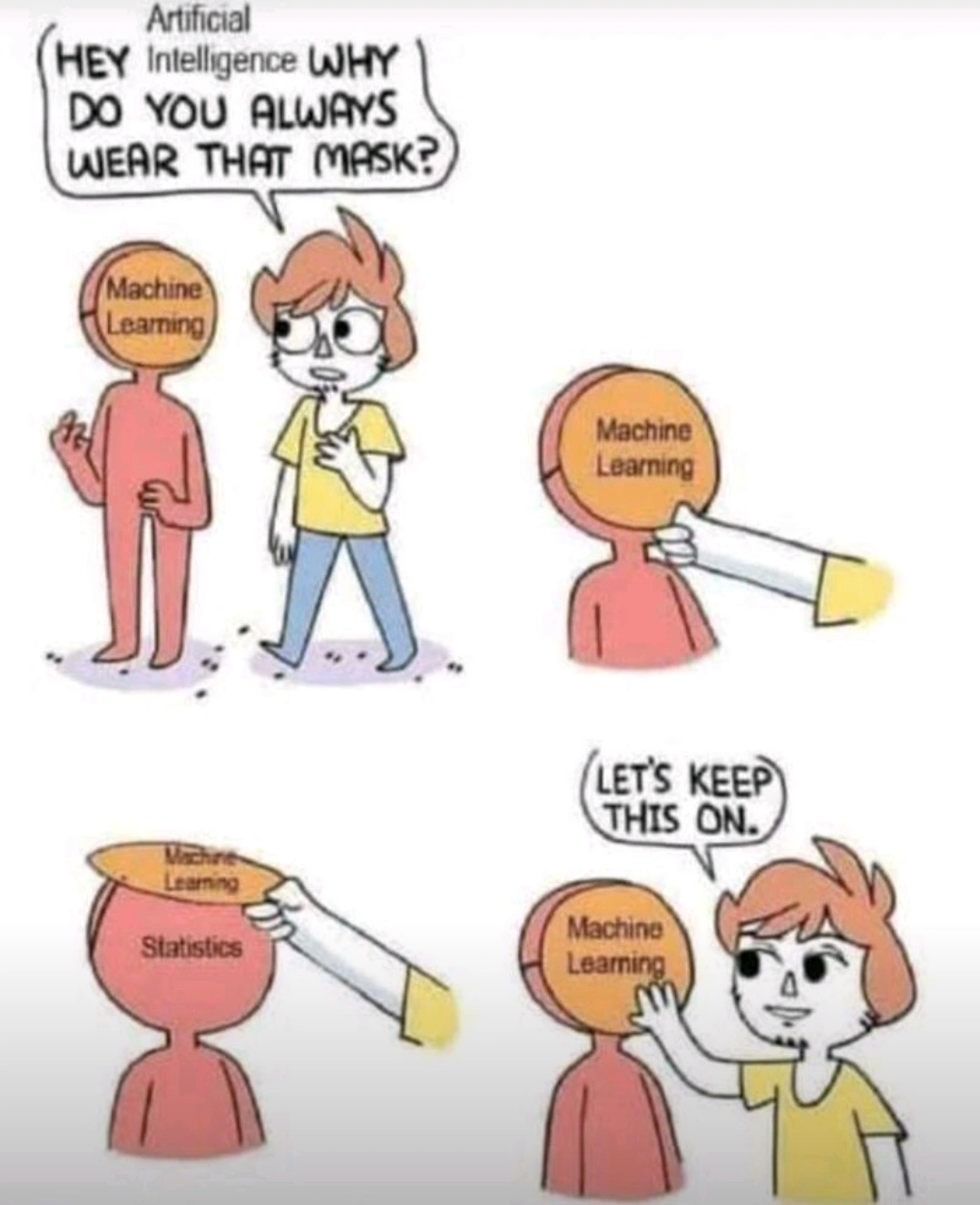

My dude it's math all the way down. Brains are not magic.

We don’t need to understand cognition, nor do we need it to work the same way as machine learning models, to say it’s essentially a statistical model.

It’s enough to say that cognition is a black-box process that takes sensory inputs to grow and learn, producing outputs like muscle commands.

You can abstract everything down to that level; that doesn't make it any more right.

Yes, that's physics. We abstract things down to their constituent parts, to figure out what they are made up of, and how they work. Human brains aren't straightforward computers, so they must rely on statistics if there is nothing non-physical (a "soul" or something).

I'm not saying we understand the brain perfectly, but everything we learn about it will follow logic and math.

I agree experience is incalculable, but not because it is some special immaterial substance. It's because experience just is objective reality from a particular context frame. I can do all the calculations I want on a piece of paper describing the properties of fire, but the paper they're written on won't suddenly burst into flames. A description of an object will never converge into a real object, and descriptions of reality will by no means ever become reality itself. The notion that experience is incalculable is just uninteresting. Of course, we can say the same about the wave function. We use it as a tool to predict where we will see real particles. You can't compute the real particles from the wave function either, because it's not a real entity but a description of relationships between observations (i.e. experiences) of real things.
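For context on the wave-function point: in standard quantum mechanics, the way the wave function is used to predict where particles will be detected is the Born rule. Written here for a single particle in one dimension (a simplifying assumption), it turns the wave function into probabilities for detection outcomes, not into the particles themselves:

```latex
P(a \le x \le b) = \int_a^b |\psi(x, t)|^2 \, dx,
\qquad
\int_{-\infty}^{\infty} |\psi(x, t)|^2 \, dx = 1
```

The output is a probability distribution over where a detector will register the particle, which is the sense in which the wave function is a predictive tool.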

(working with the assumption we mean stuff like ChatGPT) mKay... Tho math and logic are A LOT more than just statistics. At no point did we prove that statistics alone is enough to reach the point of cognition. I'd argue no statistical model can ever reach cognition, simply because it averages too much. The input we train it on is also fundamentally flawed: feeding it only text skips the entire thinking and processing step of creating an answer. It literally just takes text and predicts, based on previous answers, what the most likely text is. It's literally incapable of generating or reasoning in any way other than what was already spelled out somewhere in the dataset. At BEST, it's a chat simulator (or dare I say... language model?); it's nowhere near an intelligence emulator in any capacity.
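To make the "predicts the most likely text" idea concrete, here is a toy sketch in Python. It is a word-level bigram model, chosen purely for illustration: it counts which word follows which in a tiny made-up corpus and always picks the most frequent follower. Models like ChatGPT are large neural networks over subword tokens and are far more complex, so this shows only the general idea of next-token prediction, not how those systems are built. The function names (`train_bigrams`, `predict_next`, `generate`) and the corpus are hypothetical.

```python
from __future__ import annotations

from collections import Counter, defaultdict


def train_bigrams(text: str) -> dict[str, Counter]:
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    follows: dict[str, Counter] = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        follows[prev][nxt] += 1
    return follows


def predict_next(follows: dict[str, Counter], word: str) -> str | None:
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    # Ties are broken by first occurrence in the corpus (Counter.most_common behavior).
    return counts.most_common(1)[0][0]


def generate(follows: dict[str, Counter], start: str, length: int) -> list[str]:
    """Greedily extend `start` by up to `length` words, stopping at unseen words."""
    out = [start.lower()]
    for _ in range(length):
        nxt = predict_next(follows, out[-1])
        if nxt is None:
            break
        out.append(nxt)
    return out


# Hypothetical toy corpus, used only for this illustration.
corpus = "the cat sat on the mat and the cat ate the fish"
model = train_bigrams(corpus)
print(predict_next(model, "the"))  # → cat
print(" ".join(generate(model, "the", 4)))  # → the cat sat on the
```

The toy model can only emit word pairs that already appear in its corpus; real language models learn much richer statistics than raw pair counts, so treat this as an illustration of the idea rather than a model of ChatGPT.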