I am probably unqualified to speak about this, as I am using an RX 550 low profile and a 768P monitor and almost never play newer titles, but I want to kickstart a discussion, so hear me out.

The push for more realistic graphics has been going on for longer than most of us can remember, and it made sense for most of that time, as anyone who has looked at an older game can confirm - I am someone who enjoys making fun of weird-looking 3D people.

But I feel game graphics have reached the point of diminishing returns. Today's AAA studios spend millions of dollars just to match the graphical level of their previous titles - often sacrificing other, more important things along the way - and people are unnecessarily spending lots of money on power-hungry, heat-generating GPUs.

I understand getting an expensive GPU for high-resolution, high-refresh-rate gaming, but for 1080P? You shouldn't need anything more powerful than a 1080 Ti for years. I think game studios should just slow down their graphical improvements, as they are unnecessary - in my opinion - and just prevent people with lower-end systems from enjoying games. And who knows, maybe we will start seeing cheap 50-watt gaming GPUs that are viable and capable of running games at medium/high settings - even iGPUs render good graphics now.

TLDR: why pay for more and hurt the environment with higher power consumption when what we have is enough - and possibly overkill?

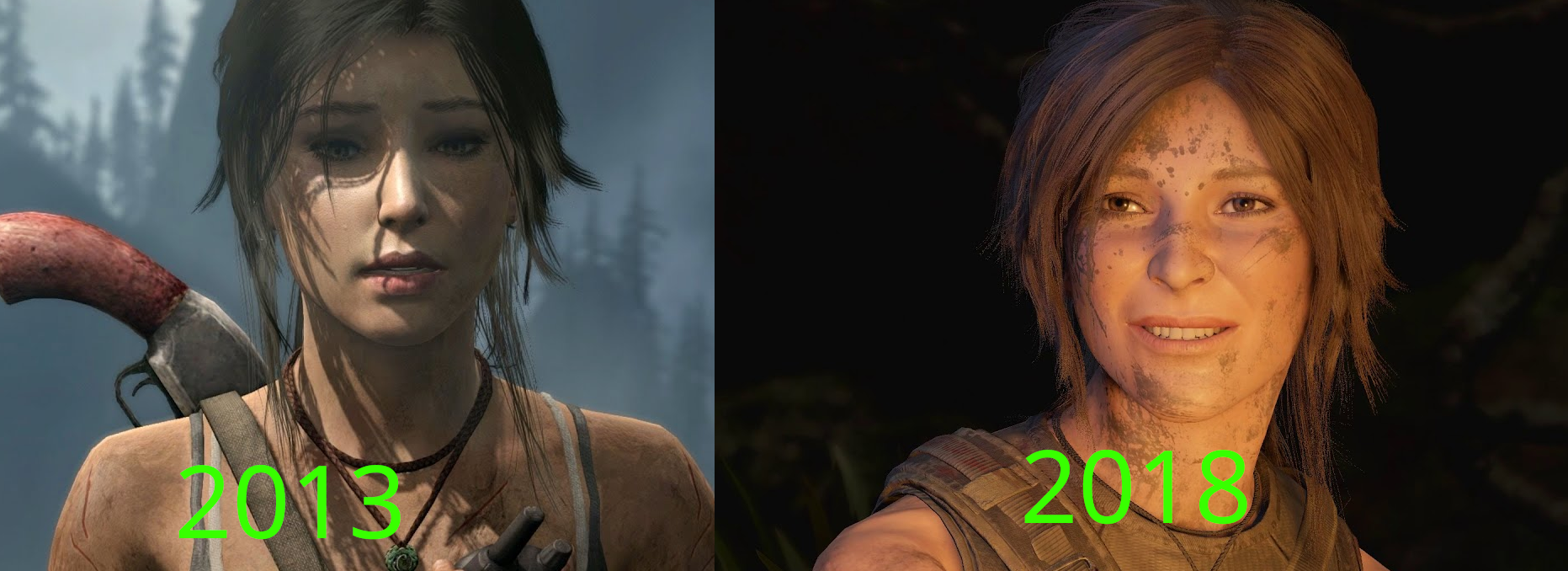

Note: it would be insane of me to claim that there is not a big difference between the two pictures - Tomb Raider (2013) vs Shadow of the Tomb Raider (2018) - but can you really call either of them bad, especially the right picture (5 years old)?

Note 2: this is not much more than a discussion starter that is unlikely to evolve into something larger.

Yeah, I agree with everything you said. But what I was trying to say is that not all of the graphics push is hurting production. I believe that in this generation alone we have many new graphics techniques that aim to improve image quality while also taking load off the devs. Just look at Remnant II, which has the graphical fidelity of a AAA game on the budget of a AA. Also, some of the increase in production time is due to the feature creep that a lot of games have. Every new game has to have millions of fetch quests, a colossal open-world map, skill trees, an online mode, crafting, a looting system, etc., even if it makes no sense for the game to have them. Almost every single game mentioned in this thread suffers from this, with Batman being notorious for its Riddler trophies, The Witcher having more question marks on the map than actual map, and Assassin's Creed... Well, do I even need to mention it? So the increase in production time is not all the fault of the increase in graphical fidelity.