My new favorite is asking if it's cheating to look at your opponent's pieces in chess.

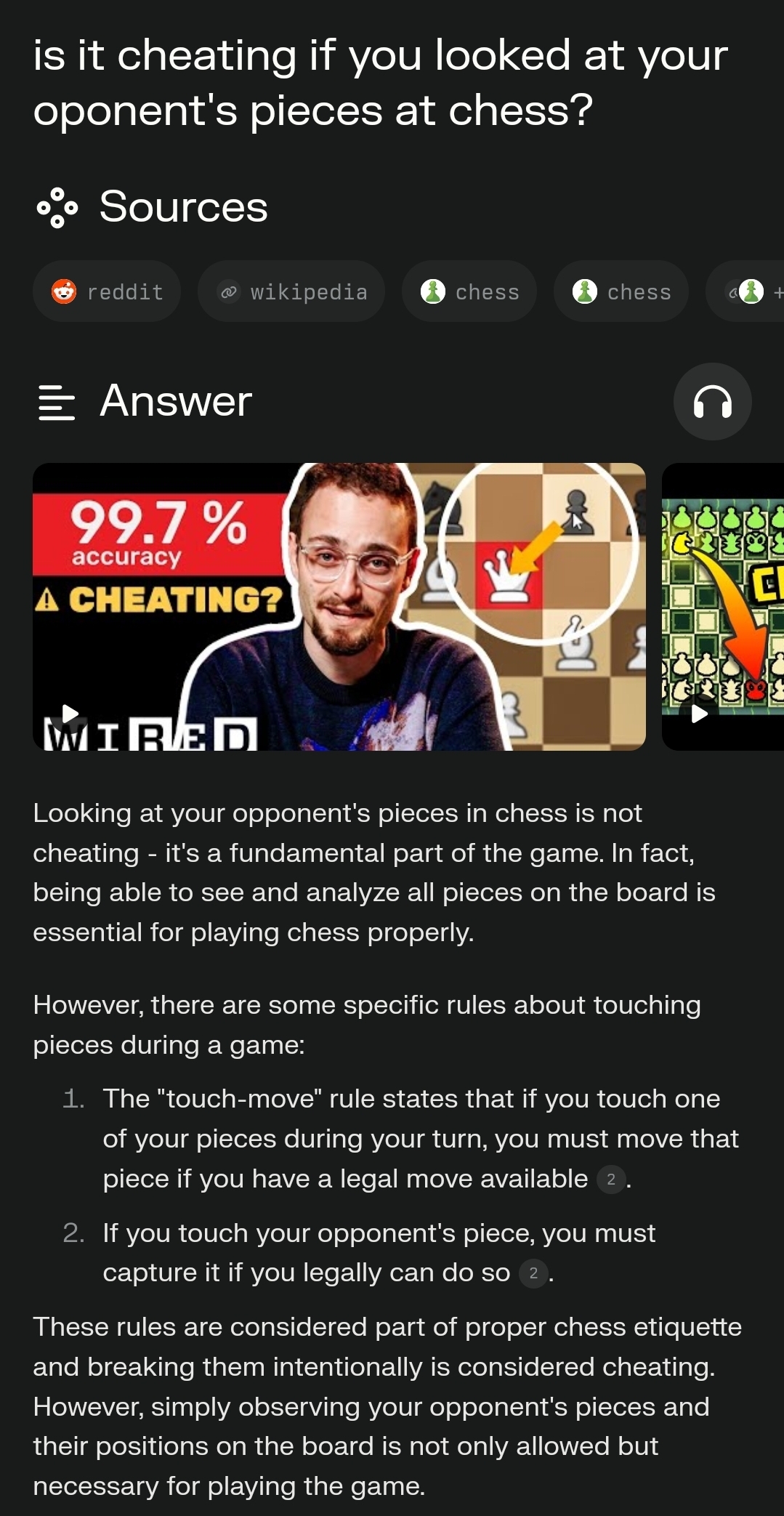

When I ask the same in Perplexity, I get this:

I’ve always been taught that if you say “I adjust” before touching a piece, then it’s OK to touch it (specifically so you can move an off-center piece into the center of its square).

Wow lol!

This showed up on HN recently. Several people who wrote web crawlers pointed out that this won’t even come close to working except on terribly written crawlers. Most just limit the number of pages crawled per domain based on popularity of the domain. So they’ll index all of Wikipedia but they definitely won’t crawl all 1 million pages of your unranked website expecting to find quality content.
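The per-domain cap described above might look roughly like this. Everything here is an assumption for illustration (the `popularity` score in [0, 1], the linear budget formula, the 50-page floor); real crawlers don't publish their formulas.

```python
from collections import defaultdict

# Hypothetical budget rule: every domain gets a small floor of pages,
# scaled up by a popularity score in [0, 1]. Numbers are illustrative only.
MIN_PAGES = 50
MAX_PAGES = 1_000_000


def page_budget(popularity: float) -> int:
    """Map a popularity score in [0, 1] to the maximum number of pages to crawl."""
    popularity = min(max(popularity, 0.0), 1.0)
    return int(MIN_PAGES + popularity * (MAX_PAGES - MIN_PAGES))


def crawl_order(frontier, popularity_of):
    """Yield (domain, url) pairs, skipping URLs once a domain's budget is spent.

    frontier: iterable of (domain, url) pairs in discovery order.
    popularity_of: function mapping a domain name to its popularity score.
    """
    crawled = defaultdict(int)
    for domain, url in frontier:
        if crawled[domain] >= page_budget(popularity_of(domain)):
            continue  # budget exhausted: further pages on this domain are never fetched
        crawled[domain] += 1
        yield domain, url
```

With a popularity of 0 for an unranked site, only the first 50 URLs discovered there get fetched, no matter how many pages a maze keeps generating.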

Can confirm, I have a website (https://2009scape.org/) with tonnes of legacy forum posts (100k+). No crawlers ever go there.

It's a shame that 404 Media didn't do any due diligence when writing this.

Sorry to tell you, but you are indexed at least by DuckDuckGo, Bing, Ecosia, Startpage, and Google, and even one of searx's crawlers has paid you a visit.

I think you may have just misunderstood the post.

It's not intended to trap the web crawlers indexing content for Google Search.

It's intended to trap AI training bots harvesting sentences in order to improve their LLMs.

I don't really have an answer as to why those bots don't find your content appealing, but that doesn't mean that Nepenthes doesn't work.

More accurately, it traps any web crawler, including regular search engines and benign projects like the Internet Archive. This should not be used without an allowlist for known trusted crawlers at least.

How exactly would that work? Would trusted crawlers be blocked from accessing the maze?
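One way an allowlist could work: verified crawlers get a 404 (and ideally a robots.txt `Disallow`) for the maze path, while every other client that follows the hidden links is routed into it. User-agent strings are trivially spoofed, so this sketch forward-confirms a reverse DNS lookup, which is the verification method Google and Bing document for their own crawlers. The `TRUSTED_CRAWLERS` table, its hostname suffixes, and the `/maze/` prefix are assumptions for illustration; check each crawler's documentation before relying on them.

```python
import socket
from typing import Callable, Iterable

# Assumed allowlist: user-agent token -> hostname suffixes its reverse DNS must end with.
TRUSTED_CRAWLERS = {
    "googlebot": (".googlebot.com", ".google.com"),
    "bingbot": (".search.msn.com",),
}
MAZE_PREFIX = "/maze/"  # hypothetical path where the tarpit is mounted


def _reverse(ip: str) -> str:
    return socket.gethostbyaddr(ip)[0]  # PTR lookup: IP -> hostname


def _forward(host: str) -> Iterable[str]:
    return socket.gethostbyname_ex(host)[2]  # A lookup: hostname -> list of IPs


def is_verified_crawler(user_agent: str, ip: str,
                        reverse: Callable[[str], str] = _reverse,
                        forward: Callable[[str], Iterable[str]] = _forward) -> bool:
    """True only if the UA claims a trusted crawler AND DNS confirms the IP belongs to it."""
    ua = user_agent.lower()
    for token, suffixes in TRUSTED_CRAWLERS.items():
        if token not in ua:
            continue
        try:
            host = reverse(ip)
            if not host.lower().endswith(suffixes):
                return False  # claims to be a trusted crawler but resolves elsewhere
            return ip in forward(host)  # forward-confirm to defeat forged PTR records
        except OSError:  # socket.herror and socket.gaierror both subclass OSError
            return False
    return False


def should_serve_maze(path: str, user_agent: str, ip: str, **resolvers) -> bool:
    """Serve maze pages to unverified clients only; verified crawlers never enter it."""
    return path.startswith(MAZE_PREFIX) and not is_verified_crawler(user_agent, ip, **resolvers)
```

The resolver parameters exist so the DNS lookups can be swapped out for testing or caching; in production you would want to cache results, since a DNS round trip per request is slow.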

But does running this cost the AI bot at least as much as it costs you to run?

Picking words at random from a dictionary would not be very compute-intensive; the content doesn't need to make sense.
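To show how cheap that is, here's a sketch that builds a page of random words plus links to more maze pages, seeding the RNG from the URL so nothing has to be stored and the same URL always returns the same page. The word list, the `/maze/` URL scheme, and the page sizes are assumptions for illustration, not necessarily how Nepenthes does it.

```python
import hashlib
import random

# Hypothetical tiny word list; a real tarpit would load a proper dictionary file.
WORDS = ["lantern", "quartz", "meadow", "orbit", "velvet", "harbor", "cinder",
         "falcon", "marble", "thistle", "glacier", "pepper", "ripple", "saddle"]


def maze_page(path: str, n_words: int = 200, n_links: int = 5) -> str:
    """Return a nonsense HTML page whose content is fully determined by its path."""
    # Derive a stable integer seed from the path (Python's built-in hash() is salted per process).
    seed = int.from_bytes(hashlib.sha256(path.encode()).digest()[:8], "big")
    rng = random.Random(seed)
    text = " ".join(rng.choice(WORDS) for _ in range(n_words))
    # Each page links to n_links fresh maze URLs, so the maze never runs out.
    links = "".join(
        f'<a href="/maze/{rng.getrandbits(64):016x}">{rng.choice(WORDS)}</a>\n'
        for _ in range(n_links)
    )
    return f"<html><body><p>{text}</p>\n{links}</body></html>"
```

Generating a page is one hash plus a few hundred RNG calls, which is trivial next to what it costs a scraper to download, store, and later filter or train on it.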

Yes, the scraper is going to mindlessly gobble up information. At best they'd expend more resources later to try to determine the value of the content, but how do you really do that? Mostly I think they're hoping the good will outweigh the bad.

I would think yes. The compute needed to make a hyperlink maze is low compared to the AI's processing of the random content: that content costs nearly nothing to make, but it still costs the same to process as genuine content.

Am I missing something?

This sort of thing has been a strategy for dealing with unwanted web crawlers since web crawlers were a thing. It's an arms race, though; crawlers do things to detect these "mazes" and so the maze-makers keep needing to up their game as well.

As we enter an age where AI is effectively passing the Turing Test, it's going to be tricky making traps for them that don't also ensnare the actual humans you're trying to serve pages to.

This won't work against commercial crawlers. They check page contents with something similar to SimHash and don't recrawl duplicate pages. They also have limits, such as a maximum crawl depth, to avoid getting stuck in circular links.
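A rough idea of a SimHash-style check: hash each word, let each word vote on every bit of a 64-bit fingerprint, and treat pages whose fingerprints differ in only a few bits as near-duplicates. The tokenizer, the BLAKE2 token hash, and the 3-bit threshold are assumptions; commercial crawlers don't publish their exact parameters.

```python
import hashlib
import re
from collections import Counter

BITS = 64


def simhash(text: str) -> int:
    """Charikar-style SimHash over lowercase word tokens, weighted by token count."""
    votes = [0] * BITS
    for token, count in Counter(re.findall(r"\w+", text.lower())).items():
        h = int.from_bytes(hashlib.blake2b(token.encode(), digest_size=8).digest(), "big")
        for i in range(BITS):
            # Each token pushes bit i up if its hash has that bit set, down otherwise.
            votes[i] += count if (h >> i) & 1 else -count
    return sum(1 << i for i in range(BITS) if votes[i] > 0)


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two fingerprints."""
    return bin(a ^ b).count("1")


def is_near_duplicate(a: str, b: str, max_distance: int = 3) -> bool:
    """Assumed threshold: fingerprints within max_distance bits count as the same page."""
    return hamming(simhash(a), simhash(b)) <= max_distance
```

Because the fingerprint is built from a bag of words, reordering the same words gives the same hash, which is one reason pages shuffled from a fixed vocabulary can look alike to a crawler.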

You could generate random content for each new page, but you'll still eventually hit the depth limit. There are probably other rules related to content quality to limit crawling too.
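A depth limit is what stops a crawler even when every maze page is unique. Here's a minimal sketch of a breadth-first crawl with one; `links_of` is a hypothetical stand-in for fetching a page and extracting its links.

```python
from collections import deque
from typing import Callable, Iterable, List


def crawl(seed: str, links_of: Callable[[str], Iterable[str]], max_depth: int) -> List[str]:
    """Breadth-first crawl that never expands pages deeper than max_depth from the seed."""
    seen = {seed}
    queue = deque([(seed, 0)])
    fetched = []
    while queue:
        url, depth = queue.popleft()
        fetched.append(url)  # stand-in for actually downloading the page
        if depth == max_depth:
            continue  # depth limit reached: don't follow this page's links
        for link in links_of(url):
            if link not in seen:
                seen.add(link)
                queue.append((link, depth + 1))
    return fetched
```

Against a maze where every page links to two fresh pages, `max_depth=3` fetches 1 + 2 + 4 + 8 = 15 pages and stops, however many URLs the maze could generate.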

True, this is an arms race after all. The real benefit of this is creating garbage training data that makes garbage models. So it doesn’t just increase the cost of crawling; it increases the cost of stealing everybody’s shit, because you need extra data quality checks. Poisoning the well.

You could theoretically use the shittiest local LLM you can find to dynamically create slop for the piggies.
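An even cheaper option than a local LLM is a word-level Markov chain trained on any text you have lying around: it produces locally plausible nonsense for almost no compute. This swaps in a Markov chain rather than an LLM, and the order-1 chain is an arbitrary choice for illustration.

```python
import random
from collections import defaultdict


def build_chain(corpus: str) -> dict:
    """Map each word to the list of words that follow it in the corpus (order-1 Markov chain)."""
    words = corpus.split()
    chain = defaultdict(list)
    for current, nxt in zip(words, words[1:]):
        chain[current].append(nxt)
    return dict(chain)


def babble(chain: dict, n_words: int, rng: random.Random) -> str:
    """Random-walk the chain for n_words words; on a dead end, jump to a random known word."""
    keys = list(chain)
    word = rng.choice(keys)
    out = [word]
    while len(out) < n_words:
        followers = chain.get(word)
        word = rng.choice(followers) if followers else rng.choice(keys)
        out.append(word)
    return " ".join(out)
```

Repeated followers are kept in the lists on purpose, so common word pairs are picked proportionally more often.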

Say it with me now: model collapse! I think this approach is especially insidious in that rather than dumping obvious nonsense into the training corpus that can then be scrubbed, it pushes the downstream LLM invisibly towards spontaneously imploding.

This reminds me of that one time a guy figured out how to make "gzip bombs" that bricked automated vuln scanners.
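For anyone who hasn't seen the trick: long runs of identical bytes compress extremely well, so a tiny gzip body can inflate into something huge for any client that blindly decompresses responses. A minimal sketch of building such a payload; the 10 MiB size is arbitrary, and the headers are just the standard ones a server sends with a pre-compressed body.

```python
import gzip

DECOMPRESSED_SIZE = 10 * 1024 * 1024  # 10 MiB of zeros (arbitrary size)


def make_gzip_bomb(decompressed_size: int = DECOMPRESSED_SIZE) -> bytes:
    """Compress a run of zero bytes; DEFLATE shrinks it by roughly three orders of magnitude."""
    return gzip.compress(bytes(decompressed_size), compresslevel=9)


payload = make_gzip_bomb()
# A server sends the payload as-is and lets the client's HTTP library inflate it.
headers = {
    "Content-Type": "text/html",
    "Content-Encoding": "gzip",
    "Content-Length": str(len(payload)),
}
print(f"{len(payload)} bytes on the wire -> {DECOMPRESSED_SIZE} bytes after decompression")
```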

I suspect that many websites already dynamically generate an unbounded number of pages based on the links one clicks, and that Web spiders have had to deal with those for as long as people have been spidering the Web, which goes back at least as far as the first Web search engines.

I'd guess that, if nothing else, they cap how far they spider a site. They're probably a lot more sophisticated than that, though, and use heuristics to figure out which sites are more worth spending indexing resources on, since it's not just a question of whether to spider but also how often. Some parts of a site are more "valuable" than others (for a search engine, a more desirable target for users clicking on results), and some update more frequently and are more useful to re-spider at a higher frequency. Google will return current news articles, yet still indexes a large portion of the content out there. They won't be doing that by simply sending Googlebot at everything they've indexed at a fixed frequency.
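The "re-spider more often if it changes more often" idea can be sketched as an adaptive interval: shrink the revisit interval when a page has changed since the last visit, grow it when it hasn't, and clamp both ends. This is one common heuristic, not a claim about how Googlebot actually schedules; the factor of 2 and the one-hour and 30-day bounds are assumptions.

```python
import hashlib
from dataclasses import dataclass

MIN_INTERVAL = 60 * 60            # 1 hour in seconds (assumed lower bound)
MAX_INTERVAL = 30 * 24 * 60 * 60  # 30 days in seconds (assumed upper bound)


@dataclass
class PageSchedule:
    interval: float = 24 * 60 * 60  # start by revisiting daily
    last_digest: str = ""

    def record_visit(self, content: bytes) -> float:
        """Update the revisit interval after fetching `content`; return it in seconds."""
        digest = hashlib.sha256(content).hexdigest()
        if self.last_digest == digest:
            self.interval = min(self.interval * 2, MAX_INTERVAL)  # unchanged: back off
        elif self.last_digest:
            self.interval = max(self.interval / 2, MIN_INTERVAL)  # changed: come back sooner
        # On the first visit there is nothing to compare against, so the interval stays put.
        self.last_digest = digest
        return self.interval
```

A news front page that changes on every visit settles at the one-hour floor, while a static page drifts out to the 30-day ceiling.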