I feel like we need to talk about Lemmy's massive tankie censorship problem. A lot of popular Lemmy communities are hosted on lemmy.ml. It's been well known for a while that the admins/mods of that instance have, let's say, rather extremist and one-sided political views. In short, they're what's colloquially referred to as tankies. This wouldn't be much of an issue if they didn't regularly abuse their admin/mod status to censor and silence people who dissent from their political beliefs and, for example, post things critical of China, Russia, the USSR, socialism, ...

As an example, there was a thread today about the anniversary of the Tiananmen Massacre. When I was reading it, there were mostly posts critical of China in the thread and some whataboutist/denialist replies critical of the USA and the west. In terms of votes, the posts critical of China were definitely getting the most support.

I posted a comment in this thread linking to "https://archive.ph/2020.07.12-074312/https://imgur.com/a/AIIbbPs" (WARNING: graphic content), which describes aspects of the atrocities that aren't widely known even in the West, along with supporting evidence. My comment was promptly removed for violating the "Be nice and civil" rule. When I looked back at the thread, I noticed that all posts critical of China had been removed while the whataboutist and denialist comments were left in place.

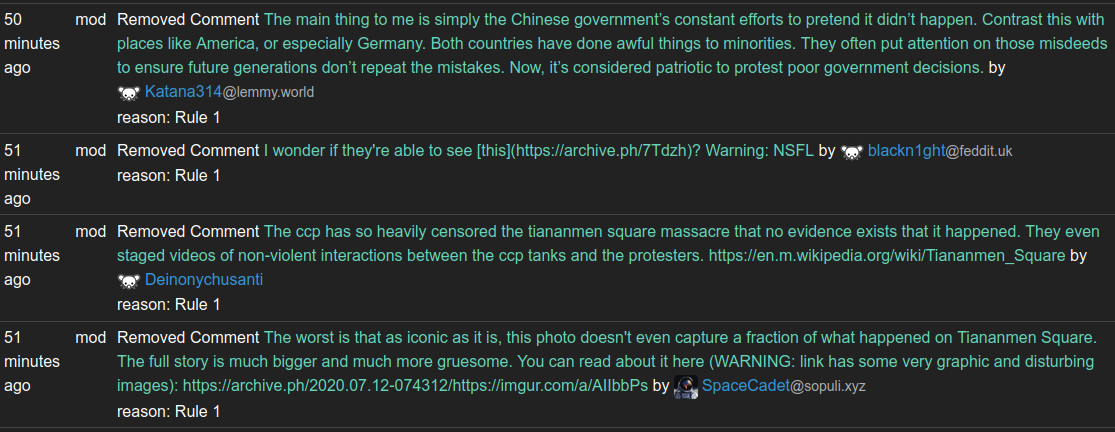

This is what the modlog of the instance looks like:

Definitely a trend there, wouldn't you say?

When I called them out on their one-sided censorship, with a screenshot of the modlog above, I promptly received a community ban on all communities on lemmy.ml that I had ever participated in.

Proof:

So many of you will now probably think something like: "So what, it's the fediverse, you can use another instance."

The problem with this reasoning is that many of the popular communities are actually on lemmy.ml, and they're not so easy to replace. I mean, in terms of content and engagement, Lemmy is already a pretty small place as it is. So it's rather pointless sitting in, for example, /c/linux@some.random.other.instance.world where there's nobody to discuss anything with.

I'm not sure if there's a solution here, but I'd like to urge people to avoid lemmy.ml hosted communities in favor of communities on more reasonable instances.

I agree with the facts here but have a slightly different conclusion. This is a problem that exists on many similar platforms like Reddit, etc. If you give mods or admins unlimited power over their users, it is an almost foregone conclusion that it will be abused in some circumstances. While Lemmy.ml is perhaps the perfect storm of a bad example, I’ve seen examples of abuses of mod power from almost every community on both Lemmy and Reddit.

So how do we fix it? Migrating to different communities or instances can sometimes help, but the potential for abuse remains. Having more options for active communities and making migration easier is a step in the right direction. Despite its flaws, Lemmy is an improvement in this respect because its federated nature allows more choice in who has power over you, but the problem remains.

In my view the internet has always worked best when problems are solved democratically rather than autocratically. Content aggregators already allow for this to some extent in what content is presented, but moderation remains quite undemocratic. I think it may be that a new platform with new innovations to make moderation decisions more driven by community consensus instead of owners or founders of communities will be needed. Exactly what this will look like, I don’t know, but some brainstorming might be in order for the next evolution in social media.

We have decades of proof of chuds brigading and building up hate speech hellfests in these "just let capitalism decide" laissez-faire models.

Moderation-free environments just turn places into kiwi farms.

I obviously didn’t explain myself clearly if that’s what you took from this. I’m saying the community should be in control of moderation, not that there should be no moderation.

It's a cute idea but in practice, very, very few users want to deal with content moderation. The vast majority of users just want to consume good, moderated content without worrying about removing bad content. Getting people to volunteer as mods is already hard enough. Making it democratic will not help, I think.

Also, mob rule is not always the best. It is not uncommon for totally reasonable takes to be downvoted, sometimes just because they started getting downvoted and then others jumped on the bandwagon.

The way to achieve democracy in communities is not by making moderation democratic, but by making community switching easy. So if you don't like your community mods, you can easily go elsewhere. That is also a kind of democracy, I would say.

Maybe a middle ground could be moderator elections. At least then it's a representative democracy and it would largely work as it already does. But again, very few people volunteer as mods, so I believe you'll find that there would be very, very few candidates to vote for (potentially just 1).

All moderator elections would do is let chuds stack the ballot. Look up shit like the sad puppies debacle.

The answer is that a site needs to decide what its rules are and then moderators need to enforce those rules, regardless of how the community feels. Which, ironically, is what ml is doing (even if they don't publicize those rules). And if the community dislikes the rules, you disassociate from them.

The issue with the fediverse is that you need to defederate or else you are tacitly approving of their bullshit.

Nobody is saying that there should be no moderation at all. What we are saying is that lemmy.ml moderators tend to remove users and content that are seen as even mildly critical of China, Russia, or Marxism-Leninism, and then sometimes hide the evidence of the removals from the modlog. That's not acceptable to many people, including me.

Kiwi farms?

https://www.wired.com/story/keffals-kiwifarms-cloudflare-blocked-clara-sorrenti/

My first idea would be to have users report posts and ping a random sample of like 20 active and currently online users of the community and have them decide (democratically). That would prevent brigading and groups collectively mobbing or harassing other users. It'd be somewhat similar to a jury in court. And we obviously can't ask everyone because that takes too much time, and sometimes content needs to be moderated asap.
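To make the jury idea concrete, here is a rough sketch, not anything Lemmy actually implements. The names `pick_jury` and `decide` are made up for illustration, and two details are my own assumptions rather than part of the proposal: the reporter and the reported author are kept off the jury, and a post is only removed on a strict majority of the votes cast.

```python
import random
from collections import Counter

JURY_SIZE = 20  # "like 20" in the proposal; the exact number is arbitrary


def pick_jury(online_active_users, reporter, author, size=JURY_SIZE, rng=random):
    """Draw a random jury from the active, currently online users.

    Assumption: the reporter and the reported author are excluded.
    """
    pool = [u for u in online_active_users if u not in (reporter, author)]
    # If fewer than `size` users are eligible, everyone eligible is asked.
    return rng.sample(pool, min(size, len(pool)))


def decide(votes):
    """Tally juror votes ('remove' or 'keep').

    Assumption: removal needs a strict majority of votes cast;
    ties and empty ballots leave the post up.
    """
    tally = Counter(votes.values())
    return "remove" if tally["remove"] > len(votes) / 2 else "keep"
```

Because the jurors are drawn at random for each report, an organized group can't count on being the ones who get asked, which is the anti-brigading property the proposal is after.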

But then if you report something nobody should see, say CP for example, you're suddenly subjecting 20 random people to seeing it.

Right but you could have filters to opt out of mod requests or certain types of mod requests. It could even be opt-in, with some trust level requirement before you're included.

Also "CSAM" is a better term, because putting "porn" in the name focuses on the intended use by abusers, whereas the term "CSAM" focuses on the victims.

I see. I had noticed people using that term instead, but I never knew why. Thanks for the info.

Why is that at all important?

Because calling it 'porn' makes it sound appealing to people who associate porn with sexy things. It makes it sound like something they might want to seek out. It also demeans the victim by rhetorically placing them as the subject of pornography, which can contribute to the damage.

Calling it "child sexual abuse material" centres the victim and puts distance between this material and pornography that was made consensually and ethically.

In a similar vein, "revenge porn" should be called sexual abuse material as well. And in fact a lot of technically legal "porn" would fall into the category of sexual abuse material if the full circumstances of its production were made known, but in the case of children the distinction is unambiguous.

This comment is kind of fascinating because it's essentially reinventing Slashdot's metamoderation system 25 years later.

It was good then. No reason it wouldn't work again today.

Basic sortition method, I think that has a lot of merit.

The problem is that someone has to host the data. That will always be true. Even in the cloud, someone has to own the servers that the data are on. The only solution I can see is something basically like what we have in the fediverse, only where other instances are sharing copies of the same community and the posts on it, kind of preventing one place from having ownership of it. But then if the instance goes down or gets defederated, I suppose you'd still have different versions of it floating around, plus the problem of someone posting CSAM and it getting pushed to all the instances and stuff.

Still, I agree that the closest solution is going to be something like the fediverse we're seeing now. I just don't know how to solve the problem of overzealous mods still, because we need mods. Having some democratic control over modding seems dangerous, too. Imagine a place gets brigaded by The Donald at 3am and everyone wakes up to their community being totally different. Or sometimes people are just wrong, like we had the problem pre-October 7th where 99% of the US believed Israel was a golden angel thanks to propaganda and dismissed all criticism. Same thing with socialism in the 1950s, or racism. Democratic modding at the time would have led to MLK getting banned lol.

It's definitely an issue, because the ml mod and mods like them are way too trigger happy on the bans, but it'll probably take some time to think of good solutions, and lots of experimenting with new forms of social media, like the fediverse.

Regarding server ownership, yes that is a thorny problem. One could dream up some kind of communally owned server system but that might be far fetched. However, I think the issue of mod power is distinct and might be easier to address.

As for the flaws of democratic systems, yes, they are real but most of these flaws apply to more autocratic systems as well. And we see from numerous examples that more democratic systems tend to abuse their power less often and less severely than autocratic ones do. It’s a higher bar to get the whole community together to ban MLK than just one racist mod. Carefully thought out governance structures can also help. You certainly don’t want 51% of the community to be able to ban 49% whenever they want to, but the ideal would be to enable easy involvement with a structure that guides users towards making the right decisions.

The structure and culture to make this work could be difficult to build, so I’m not saying it’s an easy answer but it does seem like something to consider and maybe experiment with.