this post was submitted on 30 Mar 2024


linuxmemes


Hint: :q!

Sister communities:

- LemmyMemes: Memes

- LemmyShitpost: Anything and everything goes.

- RISA: Star Trek memes and shitposts

Community rules

1. Follow the site-wide rules

- Instance-wide TOS: https://legal.lemmy.world/tos/

- Lemmy code of conduct: https://join-lemmy.org/docs/code_of_conduct.html

2. Be civil

- Understand the difference between a joke and an insult.

- Do not harass or attack members of the community for any reason.

- Leave remarks of "peasantry" to the PCMR community. If you dislike an OS/service/application, attack the thing you dislike, not the individuals who use it. Some people may not have a choice.

- Bigotry will not be tolerated.

- These rules are somewhat loosened when the subject is a public figure. Still, do not attack their person or incite harassment.

3. Post Linux-related content

- Including Unix and BSD.

- Non-Linux content is acceptable as long as it makes a reference to Linux. For example, the poorly made mockery of `sudo` in Windows.

- No porn. Even if you watch it on a Linux machine.

4. No recent reposts

- Everybody uses Arch btw, can't quit Vim, and wants to interject for a moment. You can stop now.

Please report posts and comments that break these rules!

founded 1 year ago

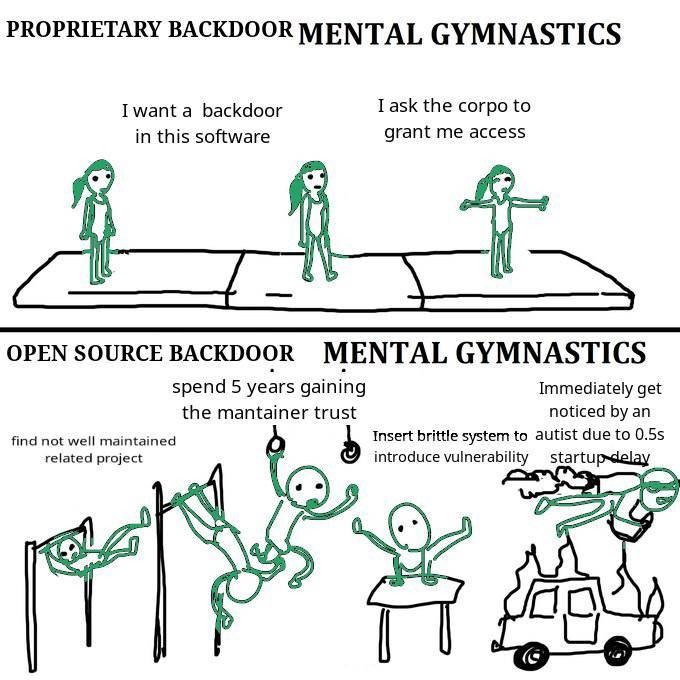

The problem I have with this meme post is that it gives a false sense of security.

Open or closed source, human beings have to be very diligent and truly spend the time reviewing others' code, even when their project leads are pressuring them to work faster and cut corners.

This situation was a textbook example of how that does not always happen. Granted, duplicity was involved, but still.

100%.

In many ways, distributed open source software offers more social attack surface, because the system itself is designed so that many people each handle a different responsibility. Almost every open source license includes an explicit disclaimer of warranty, with language that says something like this:

Well, bring together enough dependencies, and you'll see that certain widely distributed software packages depend on the trust of dozens, if not hundreds, of independent maintainers.

This particular xz vulnerability seems to have affected systemd and sshd through a socially engineered attack on a weak point in the entire dependency chain. And this particular type of social engineering (maintainer burnout, looking for a volunteer to take over) seems to fit more directly into open source culture than closed source/corporate development culture.

In the closed source world, there might be fewer places to probe for a weak link (socially or technically), which makes certain types of attacks more difficult. In other words, it might truly be the case that closed source software is less vulnerable to certain types of attacks, even if detection/audit/mitigation of those types of attacks is harder for closed source.

It's a tradeoff, not a free lunch. I still generally trust open source stuff more, but let's not pretend it's literally better in every way.

Totally agree.

All the pushback I'm getting seems to come from people worried that open source will lose a positive talking point when compared to closed source systems. Taking that talking point away is not my intention. (I personally use Fedora/KDE.)

But sticking our heads in the sand doesn't help. When issues arise, we should acknowledge them and correct them.

An example of what you may be speaking about, indirectly. We can only hope that maintainers do due diligence, but it is volunteer work.

There are two big problems with the point that you're trying to make:

1. Many open source projects are run by organizations with as much governance over commit access as a private corporation would have over its closed source code base, and often stronger. The most widely used projects tend to fall into this category, like Linux, React, Angular, Go, JavaScript, and innumerable others. A project's governance model is a very reasonable thing to consider when deciding whether to use it as a dependency for your application or library. There's a fair argument to be made that the governance model of the xz project should have been flagged sooner, and hopefully this incident will raise broader awareness of that. But unlike with a closed source code base, you can actually know the governance model and commit access model of open source software. With closed source software, you don't know anything about the company's hiring practices, background checks, what access they might give to outsourced agents from other countries who may be compromised, etc.

2. You're assuming that 100% of the source code used in a closed source project was developed by that company according to the company's governance model, which you assume is a good one. In reality, BSD/MIT-licensed (and, illegally, GPL-licensed) open source software is shoved into closed source code bases all the time. The difference with closed source software is that you have no way of knowing that this is the case. For all you know, some intern already shoved a compromised xz into some closed source software that you're using, and since that intern is gone now, it will be years before anyone in the company notices that their software has a well-known backdoor sitting in it.

None of what I'm saying is unique to the mechanics of open source. It's just that the open source ecosystem as it currently exists today has different attack surfaces than a closed source ecosystem.

At a certain point, though, trust gets outsourced: you end up trusting whoever someone else trusts. When I trust a specific distro (because I'm certainly not rolling my own), I'm trusting how they maintain their repos, as well as which packages they include by default. Then, each of those packages has dependencies, which in turn have dependencies. The nature of this kind of trust is that we select people one or two levels deep and assume that they have vetted the dependencies another one or two levels down, all the way to the bottom. The compromised xz reached sshd through systemd, opening a backdoor in sshd as built for certain distros.
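The layered trust described above can be sketched as a graph walk. This is a toy illustration, not real package metadata: the dependency edges are a simplified version of the sshd, libsystemd, liblzma chain discussed here, and every maintainer name is hypothetical. It collects every maintainer you implicitly trust by choosing one top-level package, along with how many levels down each one sits.

```python
from collections import deque

# Simplified, partly hypothetical dependency graph: package -> direct dependencies.
DEPENDS_ON = {
    "distro-base": ["sshd", "coreutils"],
    "sshd": ["libsystemd", "openssl"],
    "libsystemd": ["liblzma"],
    "coreutils": [],
    "openssl": [],
    "liblzma": [],
}

# Hypothetical maintainers for each package (names are made up).
MAINTAINERS = {
    "distro-base": {"distro-team"},
    "sshd": {"alice"},
    "coreutils": {"erin"},
    "libsystemd": {"bob", "carol"},
    "openssl": {"dave"},
    "liblzma": {"frank"},
}


def trusted_maintainers(root: str) -> dict[str, int]:
    """Breadth-first walk of the dependency graph starting at `root`.

    Returns every maintainer you implicitly trust, mapped to the shallowest
    depth at which they appear (0 = the package you picked directly).
    """
    depth_of: dict[str, int] = {}
    seen = {root}
    queue = deque([(root, 0)])
    while queue:
        pkg, depth = queue.popleft()
        # BFS visits packages in order of depth, so the first depth recorded is the shallowest.
        for person in MAINTAINERS.get(pkg, set()):
            depth_of.setdefault(person, depth)
        for dep in DEPENDS_ON.get(pkg, []):
            if dep not in seen:
                seen.add(dep)
                queue.append((dep, depth + 1))
    return depth_of


if __name__ == "__main__":
    for person, depth in sorted(trusted_maintainers("distro-base").items(), key=lambda kv: kv[1]):
        print(f"depth {depth}: {person}")
```

With this made-up graph, picking `distro-base` means implicitly trusting seven maintainers, four of whom sit two or more levels below anything you chose directly.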

Not at all. I'm very aware that some prior hacks by very sophisticated, probably state-sponsored attackers have abused the chain of trust in proprietary software dependencies. Stuxnet relied on stolen private keys trusted by Windows for signing hardware drivers. The SolarWinds hack relied on compromising the build process for SolarWinds' Orion software, so that its trusted, signed updates carried the backdoor.

But my broader point is that there are simply more independent actors in the open source ecosystem. If a vulnerability takes the form of a weakest link, where compromising any one of the many independent links is enough to gain access, then a broadly distributed ecosystem is more vulnerable. If a vulnerability requires chaining different things together so that multiple parts of the ecosystem must be compromised, then distributing decision-making makes the ecosystem more robust. That's the tradeoff I'm describing, and spreading responsibility too thin introduces the weakest-link kind of vulnerability.
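The weakest-link versus chained distinction can be made concrete with a toy probability model. This assumes, purely for illustration, that every link is compromised independently with the same probability `p`; real maintainers and components are neither independent nor equally exposed, so treat the numbers as intuition only. With `n` links where any single compromise suffices, the attacker's chance of success is 1 - (1 - p)^n; with `k` links that must all be compromised, it is p^k.

```python
def _check(p: float) -> None:
    # Probabilities outside [0, 1] make no sense in this model.
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be between 0 and 1")


def p_weakest_link(p: float, n: int) -> float:
    """Attacker succeeds if ANY one of n independent links is compromised."""
    _check(p)
    # Complement of "every link holds".
    return 1 - (1 - p) ** n


def p_chained(p: float, k: int) -> float:
    """Attacker succeeds only if ALL k independent links are compromised."""
    _check(p)
    return p ** k


if __name__ == "__main__":
    print(f"weakest link, 100 links: {p_weakest_link(0.01, 100):.3f}")
    print(f"chained, 3 links:        {p_chained(0.01, 3):.1e}")
```

For example, with `p = 0.01`, a hundred weakest-link maintainers give an attacker roughly a 63% chance, while three required links drop it to about one in a million. Adding links raises the first number and lowers the second, which is the tradeoff in a nutshell.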

Forgot to ask, but I would love to hear your thoughts on what @5C5C5C@programming.dev has commented about this subject: https://lemmy.world/comment/9003210

In the broader context of that thread, I'm inclined to agree with you: the circumstances under which this particular vulnerability was discovered show that it took a decent amount of luck to catch it, and one can easily imagine circumstances where it would have slipped past the formal review processes applied to updates in these types of packages. And while it would be nice if the billion-dollar companies that rely on certain packages provided financial support for the open source projects they use, the question remains how we should handle it when those corporations don't. Do we fund it ourselves, or just live with the knowledge that our security posture isn't optimized for safety, because nobody will pay for that improvement?