this post was submitted on 14 Feb 2025

LocalLLaMA

A community for discussing LLaMA, the large language model created by Meta AI.

This is intended to be a replacement for r/LocalLLaMA on Reddit.

Fair enough. I've got a 3060 and a 4060 Ti installed, and I need to buy a new PSU to power a 1080 Ti externally.

I think the only thing I use that requires CUDA is Daz Studio, and I'm actually starting to lose interest in that.

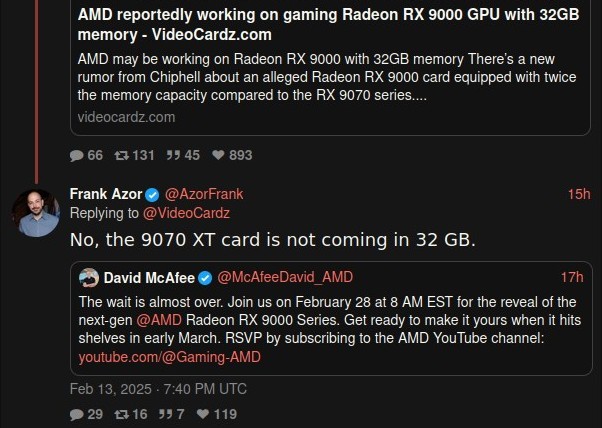

The thing about Radeon is it's not just less money, it's also less performance, plus more work to set up. I bought a Radeon laptop just so I could try ROCm. It works, but it's no walk in the park. And when you're done, you don't get any benefit over using Nvidia. If AMD at least gave us more VRAM, that would be something.