Stable Diffusion

Discuss matters related to our favourite AI Art generation technology

founded 1 year ago

MODERATORS

1

2

3

12

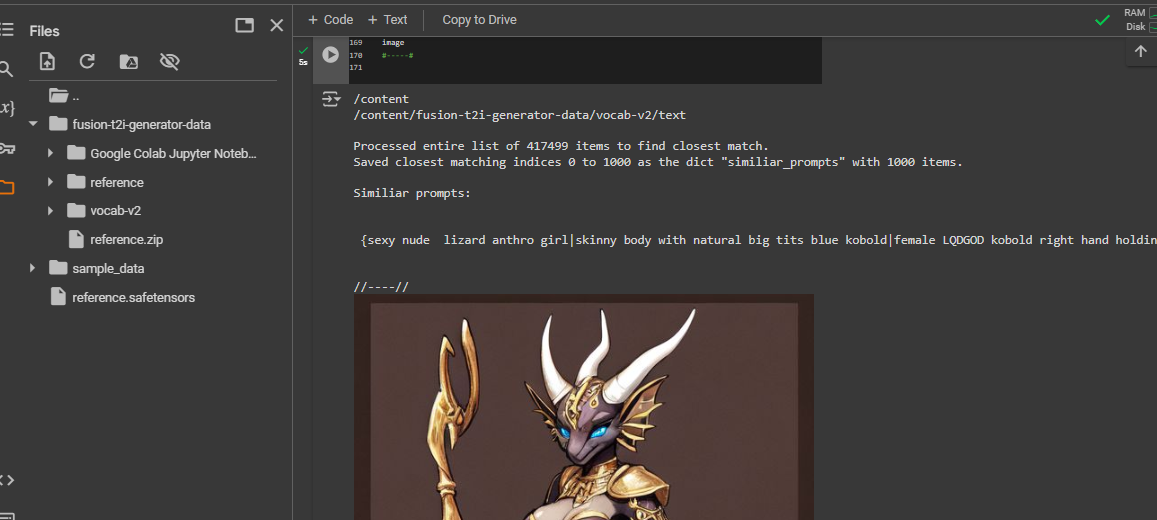

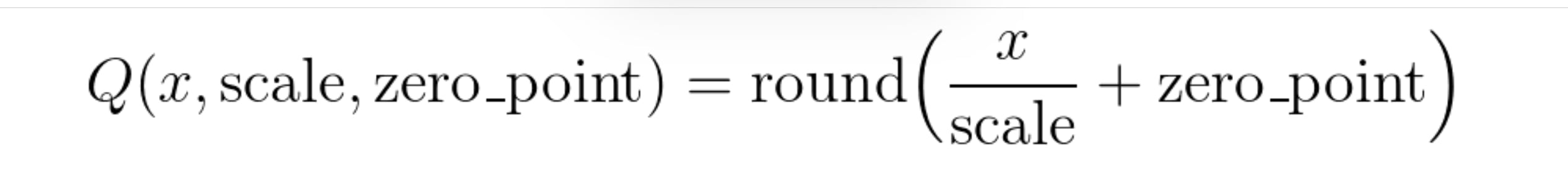

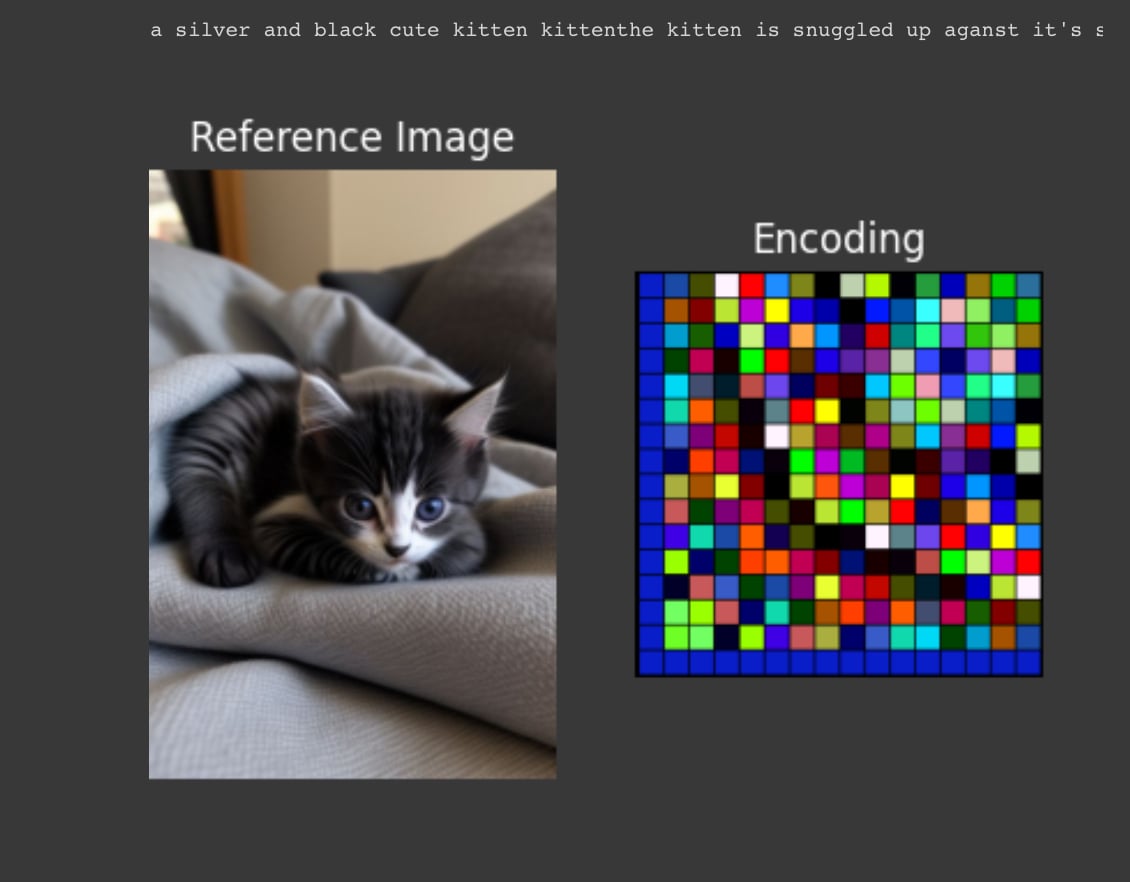

SVDQuant: Absorbing Outliers by Low-Rank Components for 4-Bit Diffusion Models

(cdn.prod.website-files.com)

Acly/krita-ai-diffusion: Version 1.26.0 Custom ComfyUI Node Graphs From Within Krita

(www.youtube.com)
